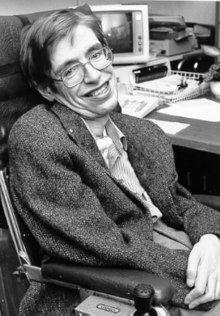

Stephen Hawking is one of the most famous people using speech synthesis to communicate.

Speech synthesis is the artificial production of human speech. A computer system used for this purpose is called a speech synthesizer, and can be implemented in software or hardware products. A text-to-speech (TTS) system converts normal language text into speech; other systems render symbolic linguistic representations like phonetic transcriptions into speech.[1]

Synthesized speech can be created by concatenating pieces of recorded speech that are stored in a database. Systems differ in the size of the stored speech units; a system that stores phones or diphones provides the largest output range, but may lack clarity. For specific usage domains, the storage of entire words or sentences allows for high-quality output. Alternatively, a synthesizer can incorporate a model of the vocal tract and other human voice characteristics to create a completely "synthetic" voice output.[2]

The quality of a speech synthesizer is judged by its similarity to the human voice and by its ability to be understood. An intelligible text-to-speech program allows people with visual impairments or reading disabilities to listen to written works on a home computer. Many computer operating systems have included speech synthesizers since the early 1990s.

Overview of a typical TTS system

A text-to-speech system (or "engine") is composed of two parts:[3] a front-end and a back-end. The front-end has two major tasks. First, it converts raw text containing symbols like numbers and abbreviations into the equivalent of written-out words. This process is often called text normalization, pre-processing, or tokenization. The front-end then assigns phonetic transcriptions to each word, and divides and marks the text into prosodic units, like phrases, clauses, and sentences. The process of assigning phonetic transcriptions to words is called text-to-phoneme or grapheme-to-phoneme conversion. Phonetic transcriptions and prosody information together make up the symbolic linguistic representation that is output by the front-end. The back-end, often referred to as the synthesizer, then converts the symbolic linguistic representation into sound. In certain systems, this part includes the computation of the target prosody (pitch contour, phoneme durations),[4] which is then imposed on the output speech.
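The front-end stages described above can be sketched as a small Python pipeline. This is a toy under stated assumptions: the lexicon, the ARPAbet-style phoneme symbols, and the fixed 80 ms duration with phrase-final lengthening are hypothetical stand-ins, and the back-end that would turn the resulting targets into audio is left out.

```python
import re

# Hypothetical toy lexicon: word -> phonemes (ARPAbet-style symbols).
LEXICON = {
    "the": ["DH", "AH"],
    "two": ["T", "UW"],
    "cats": ["K", "AE", "T", "S"],
}

DIGIT_WORDS = ["zero", "one", "two", "three", "four",
               "five", "six", "seven", "eight", "nine"]


def normalize(text):
    """Front-end task 1: text normalization (toy version).

    Splits into letter runs and single digits, lower-cases the words,
    and spells each digit out as a word.
    """
    tokens = re.findall(r"[A-Za-z]+|\d", text)
    return [DIGIT_WORDS[int(t)] if t.isdigit() else t.lower() for t in tokens]


def to_phonemes(words):
    """Front-end task 2: grapheme-to-phoneme conversion by dictionary lookup.

    Unknown words raise KeyError; a real system would fall back to rules.
    """
    return [LEXICON[w] for w in words]


def add_prosody(phoneme_words, base_ms=80.0, final_lengthening=1.5):
    """Attach a target duration to every phoneme.

    The last word of the phrase is lengthened, a crude stand-in for
    phrase-final prosody. The result plays the role of the symbolic
    linguistic representation handed to the back-end.
    """
    targets = []
    last = len(phoneme_words) - 1
    for i, phones in enumerate(phoneme_words):
        scale = final_lengthening if i == last else 1.0
        targets.extend((p, base_ms * scale) for p in phones)
    return targets


# A back-end would convert these (phoneme, duration_ms) targets into sound.
targets = add_prosody(to_phonemes(normalize("The 2 cats")))
print(targets)
```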

History

Long before electronic signal processing was invented, some people tried to build machines that could produce human speech. Some early legends of the existence of "speaking heads" involved Gerbert of Aurillac (d. 1003 AD), Albertus Magnus (1198–1280), and Roger Bacon (1214–1294).

In 1779, the Danish scientist Christian Kratzenstein, working at the Russian Academy of Sciences, built models of the human vocal tract that could produce the five long vowel sounds (in International Phonetic Alphabet notation, they are [aː], [eː], [iː], [oː] and [uː]).[5] This was followed by the bellows-operated "acoustic-mechanical speech machine" by Wolfgang von Kempelen of Pressburg, Hungary, described in a 1791 paper.[6] This machine added models of the tongue and lips, enabling it to produce consonants as well as vowels. In 1837, Charles Wheatstone produced a "speaking machine" based on von Kempelen's design, and in 1857, M. Faber built the "Euphonia". Wheatstone's design was resurrected in 1923 by Paget.[7]

In the 1930s, Bell Labs developed the vocoder, which automatically analyzed speech into its fundamental tone and resonances. From his work on the vocoder, Homer Dudley developed a keyboard-operated voice synthesizer called the Voder (Voice Demonstrator), which he exhibited at the 1939 New York World's Fair.

The Pattern Playback was built by Dr. Franklin S. Cooper and his colleagues at Haskins Laboratories in the late 1940s and completed in 1950. There were several different versions of this hardware device, but only one currently survives. The machine converts pictures of the acoustic patterns of speech in the form of a spectrogram back into sound. Using this device, Alvin Liberman and colleagues were able to discover acoustic cues for the perception of phonetic segments (consonants and vowels).

Dominant systems in the 1980s and 1990s were the MITalk system, based largely on the work of Dennis Klatt at MIT, and the Bell Labs system;[8] the latter was one of the first multilingual language-independent systems, making extensive use of natural language processing methods.

Early electronic speech synthesizers sounded robotic and were often barely intelligible. The quality of synthesized speech has steadily improved, but output from contemporary speech synthesis systems is still clearly distinguishable from actual human speech.

As improving cost-performance makes speech synthesizers cheaper and more accessible, more people will benefit from the use of text-to-speech programs.[9]

Electronic devices

The first computer-based speech synthesis systems were created in the late 1950s. The first general English text-to-speech system was developed by Noriko Umeda et al. in 1968 at the Electrotechnical Laboratory, Japan.[10]

In 1961, physicist John Larry Kelly, Jr. and colleague Louis Gerstman[11] used an IBM 704 computer to synthesize speech, an event among the most prominent in the history of Bell Labs. Kelly's vocoder synthesizer recreated the song "Daisy Bell", with musical accompaniment from Max Mathews. Coincidentally, Arthur C. Clarke was visiting his friend and colleague John Pierce at the Bell Labs Murray Hill facility. Clarke was so impressed by the demonstration that he used it in the climactic scene of his screenplay for his novel 2001: A Space Odyssey,[12] where the HAL 9000 computer sings the same song as it is being put to sleep by astronaut Dave Bowman.[13]

Despite the success of purely electronic speech synthesis, research is still being conducted into mechanical speech synthesizers.[14]

Handheld electronics featuring speech synthesis began emerging in the 1970s. One of the first was the Telesensory Systems Inc. (TSI) Speech+ portable calculator for the blind in 1976.[15][16] Other devices were produced primarily for educational purposes, such as Speak & Spell, produced by Texas Instruments in 1978.[17] Fidelity released a speaking version of its electronic chess computer in 1979.[18]

The first video game to feature speech synthesis was the 1980 shoot 'em up arcade game Stratovox, from Sun Electronics.[19] Another early example was the arcade version of Berzerk, released that same year. The first multi-player electronic game using voice synthesis was Milton from Milton Bradley Company, which produced the device in 1980.

Synthesizer technologies

The most important qualities of a speech synthesis system are naturalness and intelligibility.[citation needed] Naturalness describes how closely the output sounds like human speech, while intelligibility is the ease with which the output is understood. The ideal speech synthesizer is both natural and intelligible. Speech synthesis systems usually try to maximize both characteristics.

The two primary technologies for generating synthetic speech waveforms are concatenative synthesis and formant synthesis. Each technology has strengths and weaknesses, and the intended uses of a synthesis system will typically determine which approach is used.

Concatenative synthesis

Concatenative synthesis is based on the concatenation (or stringing together) of segments of recorded speech. Generally, concatenative synthesis produces the most natural-sounding synthesized speech. However, differences between natural variations in speech and the nature of the automated techniques for segmenting the waveforms sometimes result in audible glitches in the output. There are three main sub-types of concatenative synthesis.

Unit selection synthesis

Unit selection synthesis uses large databases of recorded speech. During database creation, each recorded utterance is segmented into some or all of the following: individual phones, diphones, half-phones, syllables, morphemes, words, phrases, and sentences. Typically, the division into segments is done using a specially modified speech recognizer set to a "forced alignment" mode, with some manual correction afterward using visual representations such as the waveform and spectrogram.[20] An index of the units in the speech database is then created based on the segmentation and acoustic parameters like the fundamental frequency (pitch), duration, position in the syllable, and neighboring phones. At run time, the desired target utterance is created by determining the best chain of candidate units from the database (unit selection). This process is typically achieved using a specially weighted decision tree.
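The source describes the unit search as a weighted decision tree. A different formulation that is also widely used, swapped in here purely for illustration, is a dynamic-programming (Viterbi) search that minimizes a target cost (distance from the front-end's desired pitch and duration) plus a join cost (mismatch at each concatenation point). The `Unit` fields, unit IDs, cost functions, and weights below are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Unit:
    """One candidate unit from a (hypothetical) indexed speech database."""
    unit_id: str
    phone: str
    pitch_hz: float
    duration_ms: float


def target_cost(unit, target, w_pitch=1.0, w_dur=0.5):
    """Distance between a unit and the front-end's desired pitch/duration."""
    return (w_pitch * abs(unit.pitch_hz - target["pitch_hz"])
            + w_dur * abs(unit.duration_ms - target["duration_ms"]))


def join_cost(prev, unit, w_join=2.0):
    """Penalty for a pitch jump where two units are concatenated."""
    return w_join * abs(prev.pitch_hz - unit.pitch_hz)


def select_units(targets, candidates):
    """Viterbi search for the cheapest chain, one candidate per target.

    targets: list of dicts with 'pitch_hz' and 'duration_ms'.
    candidates: list of candidate-unit lists, aligned with targets.
    Returns (total_cost, chosen_units).
    """
    # Each entry: (cost of the best chain ending in this candidate, that chain).
    best = [(target_cost(u, targets[0]), [u]) for u in candidates[0]]
    for t in range(1, len(targets)):
        step = []
        for u in candidates[t]:
            tc = target_cost(u, targets[t])
            cost, chain = min(
                ((c + join_cost(ch[-1], u) + tc, ch) for c, ch in best),
                key=lambda item: item[0],
            )
            step.append((cost, chain + [u]))
        best = step
    return min(best, key=lambda item: item[0])


targets = [{"pitch_hz": 120.0, "duration_ms": 80.0},
           {"pitch_hz": 110.0, "duration_ms": 90.0}]
candidates = [
    [Unit("ae_017", "AE", 120.0, 80.0), Unit("ae_203", "AE", 150.0, 80.0)],
    [Unit("t_042", "T", 110.0, 90.0), Unit("t_311", "T", 150.0, 90.0)],
]
cost, chain = select_units(targets, candidates)
print(cost, [u.unit_id for u in chain])
```

Keeping whole chains in memory keeps the sketch short; a production search would store back-pointers and prune the candidate lists.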

Unit selection provides the greatest naturalness, because it applies only a small amount of digital signal processing (DSP) to the recorded speech. DSP often makes recorded speech sound less natural, although some systems use a small amount of signal processing at the point of concatenation to smooth the waveform. The output from the best unit-selection systems is often indistinguishable from real human voices, especially in contexts for which the TTS system has been tuned. However, maximum naturalness typically requires unit-selection speech databases to be very large, in some systems ranging into the gigabytes of recorded data, representing dozens of hours of speech.[21] Also, unit selection algorithms have been known to select segments from a place that results in less than ideal synthesis (e.g. minor words become unclear) even when a better choice exists in the database.[22] Recently, researchers have proposed various automated methods to detect unnatural segments in unit-selection speech synthesis systems.[23]
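The smoothing at the point of concatenation mentioned above can be as simple as a short crossfade. This minimal sketch assumes plain lists of float samples and a linear fade; real systems may use other windows or pitch-synchronous joins.

```python
def crossfade_concat(a, b, overlap):
    """Join two sample sequences with a linear crossfade over `overlap` samples.

    The last `overlap` samples of `a` fade out while the first `overlap`
    samples of `b` fade in, which hides small discontinuities at the join.
    """
    if overlap <= 0:
        return list(a) + list(b)
    if overlap > min(len(a), len(b)):
        raise ValueError("overlap is longer than one of the segments")
    head = list(a[:-overlap])
    tail = list(b[overlap:])
    mixed = []
    for i in range(overlap):
        w = (i + 1) / (overlap + 1)  # fade-in weight for b, strictly between 0 and 1
        mixed.append((1.0 - w) * a[len(a) - overlap + i] + w * b[i])
    return head + mixed + tail


print(crossfade_concat([1.0] * 4, [0.0] * 4, 3))
```

The joined signal is `overlap` samples shorter than the two inputs combined, since those samples are shared.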

Diphone synthesis

Diphone synthesis uses a minimal speech database containing all the diphones (sound-to-sound transitions) occurring in a language. The number of diphones depends on the phonotactics of the language: for example, Spanish has about 800 diphones, and German about 2500. In diphone synthesis, only one example of each diphone is contained in the speech database. At runtime, the target prosody of a sentence is superimposed on these minimal units by means of digital signal processing techniques such as linear predictive coding, PSOLA[24] or MBROLA.[25]
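Splitting an utterance into diphones is mechanical once its phone sequence is known. The sketch below assumes a pause symbol `_` at both ends and hypothetical phone symbols; the second function shows why the inventory is bounded by the square of the symbol count, a bound that phonotactic restrictions then reduce.

```python
def to_diphones(phones, pause="_"):
    """Split a phone sequence into diphones (phone-to-phone transitions).

    The sequence is padded with a pause symbol so that the transitions into
    the first phone and out of the last phone are also covered.
    """
    padded = [pause, *phones, pause]
    return [f"{left}-{right}" for left, right in zip(padded, padded[1:])]


def diphone_upper_bound(n_symbols):
    """Every ordered pair of symbols: an upper bound before phonotactics
    rules out pairs that never occur in the language."""
    return n_symbols * n_symbols


print(to_diphones(["h", "e", "l", "o"]))
```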

Diphone synthesis suffers from the sonic glitches of concatenative synthesis and the robotic-sounding nature of formant synthesis, and has few of the advantages of either approach other than small size. As such, its use in commercial applications is declining,[citation needed] although it continues to be used in research because there are a number of freely available software implementations.

Domain-specific synthesis

Domain-specific synthesis concatenates prerecorded words and phrases to create complete utterances. It is used in applications where the variety of texts the system will output is limited to a particular domain, like transit schedule announcements or weather reports.[26] The technology is very simple to implement, and has been in commercial use for a long time, in devices like talking clocks and calculators. The level of naturalness of these systems can be very high because the variety of sentence types is limited, and they closely match the prosody and intonation of the original recordings.[citation needed]

Because these systems are limited by the words and phrases in their databases, they are not general-purpose and can only synthesize the combinations of words and phrases with which they have been preprogrammed. The blending of words within naturally spoken language, however, can still cause problems unless the many variations are taken into account. For example, in non-rhotic dialects of English the "r" in words like "clear" /ˈklɪə/ is usually only pronounced when the following word begins with a vowel (e.g. "clear out" is realized as /ˌklɪəɾˈʌʊt/). Likewise in French, many final consonants that are otherwise silent are pronounced when followed by a word that begins with a vowel, an effect called liaison. This alternation cannot be reproduced by a simple word-concatenation system, which would require additional complexity to be context-sensitive.
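One way to add that context sensitivity is to record alternative takes and choose among them by looking at the next word. This is a hypothetical illustration: the file names, the `before_vowel` take, and the use of the next word's first letter (rather than its first sound) are simplifying assumptions.

```python
# Hypothetical recordings: every word has a default take; "clear" also has a
# take recorded with the linking /r/ pronounced, for use before vowels.
RECORDINGS = {
    "clear": {"default": "clear.wav", "before_vowel": "clear_linking_r.wav"},
    "out": {"default": "out.wav"},
    "skies": {"default": "skies.wav"},
}

VOWEL_LETTERS = set("aeiou")


def choose_takes(words):
    """Pick one recording per word, using the before-vowel take when the next
    word starts with a vowel letter and such a take exists."""
    files = []
    for i, word in enumerate(words):
        takes = RECORDINGS[word]
        next_word = words[i + 1] if i + 1 < len(words) else ""
        if next_word[:1] in VOWEL_LETTERS and "before_vowel" in takes:
            files.append(takes["before_vowel"])
        else:
            files.append(takes["default"])
    return files


print(choose_takes(["clear", "out"]))
print(choose_takes(["clear", "skies"]))
```

Every extra context that matters multiplies the number of takes to record, which is the added complexity the paragraph above refers to.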

Formant synthesis

Formant synthesis does not use human speech samples at runtime. Instead, the synthesized speech output is created using additive synthesis and an acoustic model (physical modelling synthesis).[27] Parameters such as fundamental frequency, voicing, and noise levels are varied over time to create a waveform of artificial speech. This method is sometimes called rules-based synthesis; however, many concatenative systems also have rules-based components. Many systems based on formant synthesis technology generate artificial, robotic-sounding speech that would never be mistaken for human speech. However, maximum naturalness is not always the goal of a speech synthesis system, and formant synthesis systems have advantages over concatenative systems. Formant-synthesized speech can be reliably intelligible, even at very high speeds, avoiding the acoustic glitches that commonly plague concatenative systems. High-speed synthesized speech is used by the visually impaired to quickly navigate computers using a screen reader. Formant synthesizers are usually smaller programs than concatenative systems because they do not have a database of speech samples. They can therefore be used in embedded systems, where memory and microprocessor power are especially limited. Because formant-based systems have complete control of all aspects of the output speech, a wide variety of prosodies and intonations can be output, conveying not just questions and statements, but a variety of emotions and tones of voice.

Examples of non-real-time but highly accurate intonation control in formant synthesis include the work done in the late 1970s for the Texas Instruments toy Speak & Spell, and in the early 1980s for Sega arcade machines[28] and many Atari, Inc. arcade games[29] using the TMS5220 LPC chips. Creating proper intonation for these projects was painstaking, and the results have yet to be matched by real-time text-to-speech interfaces.[30]
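The source-filter idea behind formant synthesis can be sketched as follows: an impulse train at the fundamental frequency stands in for voicing and passes through a cascade of two-pole resonators, one per formant. This is a minimal sketch under stated assumptions: all parameters are held constant rather than varied over time, there is no noise source, and the formant frequencies and bandwidths in the usage line are rough illustrative values for an /a/-like vowel, not taken from the source.

```python
import math


def resonator(signal, freq_hz, bw_hz, fs):
    """Two-pole digital resonator: y[n] = a*x[n] + b*y[n-1] + c*y[n-2].

    Produces a spectral peak at freq_hz with bandwidth bw_hz; a is chosen
    so that the gain at 0 Hz is exactly 1.
    """
    t = 1.0 / fs
    c = -math.exp(-2.0 * math.pi * bw_hz * t)
    b = 2.0 * math.exp(-math.pi * bw_hz * t) * math.cos(2.0 * math.pi * freq_hz * t)
    a = 1.0 - b - c
    y1 = y2 = 0.0
    out = []
    for x in signal:
        y = a * x + b * y1 + c * y2
        out.append(y)
        y2, y1 = y1, y
    return out


def synth_vowel(formants, f0_hz=120.0, duration_s=0.3, fs=16000):
    """Voiced source (impulse train at f0) through cascaded formant resonators."""
    n = int(duration_s * fs)
    period = int(fs / f0_hz)
    samples = [1.0 if i % period == 0 else 0.0 for i in range(n)]
    for freq_hz, bw_hz in formants:
        samples = resonator(samples, freq_hz, bw_hz, fs)
    peak = max(abs(s) for s in samples) or 1.0
    return [s / peak for s in samples]  # scale into [-1, 1]


# Illustrative formants for an /a/-like vowel: (frequency, bandwidth) in Hz.
vowel = synth_vowel([(730.0, 60.0), (1090.0, 90.0), (2440.0, 120.0)])
```

Because the whole signal comes from a handful of numbers, changing `f0_hz` or the formant table changes pitch or vowel quality directly, which is the control the paragraph above attributes to formant systems.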

Articulatory synthesis

Articulatory synthesis refers to computational techniques for synthesizing speech based on models of the human vocal tract and the articulation processes occurring there. The first articulatory synthesizer regularly used for laboratory experiments was developed at Haskins Laboratories in the mid-1970s by Philip Rubin, Tom Baer, and Paul Mermelstein. This synthesizer, known as ASY, was based on vocal tract models developed at Bell Laboratories in the 1960s and 1970s by Paul Mermelstein, Cecil Coker, and colleagues.

Until recently, articulatory synthesis models had not been incorporated into commercial speech synthesis systems. A notable exception is the NeXT-based system originally developed and marketed by Trillium Sound Research, a spin-off company of the University of Calgary, where much of the original research was conducted. Following the demise of the various incarnations of NeXT (founded by Steve Jobs in 1985 and merged with Apple Computer in 1997), the Trillium software was published under the GNU General Public License, with work continuing as gnuspeech. The system, first marketed in 1994, provides full articulatory-based text-to-speech conversion using a waveguide or transmission-line analog of the human oral and nasal tracts controlled by Carré's "distinctive region model".

HMM-based synthesis

HMM-based synthesis, also called statistical parametric synthesis, is a synthesis method based on hidden Markov models. In this system, the frequency spectrum (vocal tract), fundamental frequency (vocal source), and duration (prosody) of speech are modeled simultaneously by HMMs. Speech waveforms are generated from the HMMs themselves based on the maximum likelihood criterion.[31]
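One common way to realize that maximum-likelihood step, shown here only as an illustration, is parameter generation with dynamic features: each frame has a Gaussian over a static parameter and over its delta (local slope), and the trajectory that maximizes their joint likelihood is the solution of a linear system. The sketch assumes the state sequence and durations are already fixed and expanded to per-frame means and variances, uses one parameter dimension, a delta of 0.5 * (next - previous) with repeated edge frames, and a plain Gaussian-elimination solver; the vocoder that turns parameters into a waveform is omitted.

```python
def solve(a, y):
    """Solve a x = y by Gaussian elimination with partial pivoting."""
    n = len(y)
    m = [row[:] + [y[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for k in range(col, n + 1):
                m[r][k] -= f * m[col][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][k] * x[k] for k in range(r + 1, n))) / m[r][r]
    return x


def mlpg(static_mean, static_var, delta_mean, delta_var):
    """Trajectory c maximizing the likelihood of [statics; deltas] = W c.

    Solves (W^T P W) c = W^T P mu, where P holds the inverse variances and
    the delta of frame t is 0.5 * (c[t+1] - c[t-1]), with edge frames repeated.
    """
    T = len(static_mean)
    rows = []
    for t in range(T):  # static rows: identity
        row = [0.0] * T
        row[t] = 1.0
        rows.append(row)
    for t in range(T):  # delta rows
        row = [0.0] * T
        row[max(t - 1, 0)] -= 0.5
        row[min(t + 1, T - 1)] += 0.5
        rows.append(row)
    mu = list(static_mean) + list(delta_mean)
    prec = [1.0 / v for v in list(static_var) + list(delta_var)]
    K = 2 * T
    a = [[sum(rows[k][i] * prec[k] * rows[k][j] for k in range(K)) for j in range(T)]
         for i in range(T)]
    b = [sum(rows[k][i] * prec[k] * mu[k] for k in range(K)) for i in range(T)]
    return solve(a, b)


# Hypothetical per-frame means for one parameter: a step from 0 to 1.
# Tight zero-mean delta variances pull the output toward a smooth ramp.
smooth = mlpg([0.0, 0.0, 0.0, 1.0, 1.0, 1.0], [1.0] * 6, [0.0] * 6, [0.05] * 6)
print([round(v, 3) for v in smooth])
```

With very large delta variances the output collapses to the static means; tighter delta variances trade fidelity to the means for smoothness.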

Sinewave synthesis

Sinewave synthesis is a technique for synthesizing speech by replacing the formants (main bands of energy) with pure tone whistles.[32]
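A minimal sketch of the idea, assuming the formant tracks (one frequency and one amplitude per sample) are already known: each track drives one sinusoid, and the tones are summed. The tracks in the usage lines are made up for illustration.

```python
import math


def sinewave_speech(tracks, fs=8000):
    """Sum one sinusoid per formant track.

    tracks: list of (freqs_hz, amps) pairs, each a list with one value per
    output sample. Phase is accumulated sample by sample so a tone glides
    smoothly when its frequency changes.
    """
    n = len(tracks[0][0])
    out = [0.0] * n
    for freqs_hz, amps in tracks:
        phase = 0.0
        for i in range(n):
            out[i] += amps[i] * math.sin(phase)
            phase += 2.0 * math.pi * freqs_hz[i] / fs
    return out


# Hypothetical tracks: F1 gliding from 300 Hz to 700 Hz under a steady 1200 Hz F2.
fs = 8000
n = fs // 4  # 0.25 s
f1 = [300.0 + 400.0 * i / n for i in range(n)]
f2 = [1200.0] * n
signal = sinewave_speech([(f1, [0.6] * n), (f2, [0.3] * n)], fs)
```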

Challenges

Text normalization challenges

The process of normalizing text is rarely straightforward. Texts are full of heteronyms, numbers, and abbreviations that all require expansion into a phonetic representation. There are many spellings in English which are pronounced differently based on context. For example, "My latest project is to learn how to better project my voice" contains two pronunciations of "project".

Most text-to-speech (TTS) systems do not generate semantic representations of their input texts, as processes for doing so are not reliable, well understood, or computationally effective. As a result, various heuristic techniques are used to guess the proper way to disambiguate homographs, like examining neighboring words and using statistics about frequency of occurrence.

Recently, TTS systems have begun to use HMMs (discussed above) to generate "parts of speech" to aid in disambiguating homographs. This technique is quite successful for many cases, such as whether "read" should be pronounced as "red" implying past tense, or as "reed" implying present tense. Typical error rates when using HMMs in this fashion are usually below five percent. These techniques also work well for most European languages, although access to the required training corpora is frequently difficult in these languages.
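The neighboring-word heuristic mentioned earlier can be illustrated with the "project" example. This is not an HMM tagger; it is a hand-written rule that looks only at the previous word, and the cue-word sets and ARPAbet-style pronunciations are assumptions.

```python
# Hypothetical ARPAbet-style pronunciations; digits mark stress.
PROJECT = {"noun": "P R AA1 JH EH0 K T", "verb": "P R AH0 JH EH1 K T"}

# Hand-picked cue words (assumed): what the previous word suggests.
NOUN_CUES = {"a", "an", "the", "my", "your", "his", "her", "our", "their",
             "this", "that", "latest", "new"}
VERB_CUES = {"to", "will", "can", "should", "must", "better"}


def pronounce_project(tokens, i):
    """Guess the reading of tokens[i] == 'project' from the previous word."""
    prev = tokens[i - 1].lower() if i > 0 else ""
    if prev in NOUN_CUES:
        return PROJECT["noun"]
    if prev in VERB_CUES:
        return PROJECT["verb"]
    return PROJECT["noun"]  # assumed default when no cue matches


tokens = "My latest project is to learn how to better project my voice".split()
readings = [pronounce_project(tokens, i) for i, w in enumerate(tokens) if w == "project"]
print(readings)
```

A statistical tagger replaces the hand-picked cue sets with probabilities learned from a corpus, which is what makes it scale beyond a single word.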

Deciding how to convert numbers is another problem that TTS systems have to address. It is a simple programming challenge to convert a number into words (at least in English), like "1325" becoming "one thousand three hundred twenty-five". However, numbers occur in many different contexts; "1325" may also be read as "one three two five", "thirteen twenty-five" or "thirteen hundred and twenty-five". A TTS system can often infer how to expand a number based on surrounding words, numbers, and punctuation, and sometimes the system provides a way to specify the context if it is ambiguous.[33]
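The sketch below implements three of the readings of "1325" and picks one from a cue in the preceding word. The cue words (`in`, `pin`, and so on) and the 1100 to 1999 year range are hypothetical choices for illustration; a production front end would use far richer context.

```python
ONES = ["zero", "one", "two", "three", "four", "five", "six", "seven",
        "eight", "nine", "ten", "eleven", "twelve", "thirteen", "fourteen",
        "fifteen", "sixteen", "seventeen", "eighteen", "nineteen"]
TENS = ["", "", "twenty", "thirty", "forty", "fifty", "sixty", "seventy",
        "eighty", "ninety"]


def below_100(n):
    if n < 20:
        return ONES[n]
    tens, ones = divmod(n, 10)
    return TENS[tens] + ("-" + ONES[ones] if ones else "")


def below_1000(n):
    hundreds, rest = divmod(n, 100)
    parts = [ONES[hundreds] + " hundred"] if hundreds else []
    if rest or not hundreds:
        parts.append(below_100(rest))
    return " ".join(parts)


def cardinal(n):
    """0 <= n < 1,000,000 read as an English cardinal number."""
    thousands, rest = divmod(n, 1000)
    if not thousands:
        return below_1000(rest)
    words = below_1000(thousands) + " thousand"
    return words + (" " + below_1000(rest) if rest else "")


def digit_by_digit(n):
    return " ".join(ONES[int(d)] for d in str(n))


def year_style(n):
    """Four-digit year reading, e.g. 1325 -> 'thirteen twenty-five'."""
    high, low = divmod(n, 100)
    if low == 0:
        return below_100(high) + " hundred"
    if low < 10:
        return below_100(high) + " oh " + ONES[low]
    return below_100(high) + " " + below_100(low)


# Hypothetical context cues taken from the word just before the number.
YEAR_CUES = {"in", "since", "year"}
DIGIT_CUES = {"pin", "code", "extension"}


def expand_number(token, prev_word=""):
    n = int(token)
    prev = prev_word.lower()
    if prev in YEAR_CUES and 1100 <= n <= 1999:
        return year_style(n)
    if prev in DIGIT_CUES:
        return digit_by_digit(n)
    return cardinal(n)


print(expand_number("1325"), "|", expand_number("1325", "in"))
```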

Roman numerals can also be read differently depending on context. For example, "Henry VIII" reads as "Henry the Eighth", while "Chapter VIII" reads as "Chapter Eight".
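A toy version of this rule: convert the numeral to an integer using subtractive notation, then read it as a cardinal after a counting noun and as an ordinal otherwise. The noun list and the 1 to 20 word tables are assumptions kept small for brevity.

```python
ROMAN_VALUES = {"I": 1, "V": 5, "X": 10, "L": 50, "C": 100, "D": 500, "M": 1000}

CARDINAL_WORDS = ["zero", "one", "two", "three", "four", "five", "six",
                  "seven", "eight", "nine", "ten", "eleven", "twelve",
                  "thirteen", "fourteen", "fifteen", "sixteen", "seventeen",
                  "eighteen", "nineteen", "twenty"]
ORDINAL_WORDS = ["zeroth", "first", "second", "third", "fourth", "fifth",
                 "sixth", "seventh", "eighth", "ninth", "tenth", "eleventh",
                 "twelfth", "thirteenth", "fourteenth", "fifteenth",
                 "sixteenth", "seventeenth", "eighteenth", "nineteenth",
                 "twentieth"]

# Hypothetical list of nouns after which a numeral is read as a plain number.
COUNTING_NOUNS = {"chapter", "volume", "part", "act", "book", "section"}


def roman_to_int(numeral):
    """Subtractive notation: a symbol before a larger one is subtracted (IV = 4)."""
    total = 0
    for symbol, following in zip(numeral, numeral[1:] + " "):
        value = ROMAN_VALUES[symbol]
        if following in ROMAN_VALUES and ROMAN_VALUES[following] > value:
            total -= value
        else:
            total += value
    return total


def read_roman(prev_word, numeral):
    n = roman_to_int(numeral)
    if not 1 <= n <= 20:
        raise ValueError("the toy word tables only cover 1 to 20")
    if prev_word.lower() in COUNTING_NOUNS:
        return f"{prev_word} {CARDINAL_WORDS[n].capitalize()}"
    # Otherwise assume a regnal name such as "Henry VIII".
    return f"{prev_word} the {ORDINAL_WORDS[n].capitalize()}"


print(read_roman("Henry", "VIII"), "|", read_roman("Chapter", "VIII"))
```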

Similarly, abbreviations can be ambiguous. For example, the abbreviation "in" for "inches" must be differentiated from the word "in", and the address "12 St John St." uses the same abbreviation for both "Saint" and "Street". TTS systems with intelligent front ends can make educated guesses about ambiguous abbreviations, while others provide the same result in all cases, resulting in nonsensical (and sometimes comical) outputs, such as "co-operation" being rendered as "company operation".
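For the "St" case, a simple heuristic is to look at the following token: a capitalized word suggests a name ("Saint"), anything else suggests "Street". The rule below is an assumption that covers the source's example, not a general solution.

```python
def expand_st(text):
    """Read each 'St' or 'St.' token as 'Saint' or 'Street'.

    Heuristic (an assumption, not a general rule): a following capitalized
    word is probably a name, so read 'Saint'; otherwise read 'Street'.
    The trailing period of 'St.' is dropped, even where it also ends
    the sentence.
    """
    tokens = text.split()
    out = []
    for i, token in enumerate(tokens):
        if token.rstrip(".") == "St":
            following = tokens[i + 1] if i + 1 < len(tokens) else ""
            out.append("Saint" if following[:1].isupper() else "Street")
        else:
            out.append(token)
    return " ".join(out)


print(expand_st("12 St John St."))
```

A sentence such as "St Paul's is on Ludgate Hill" still works, but "Main St Paul visited" would be misread, which is the kind of case where front ends need more context.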

Text-to-phoneme challenges

Speech synthesis systems use two basic approaches to determine the pronunciation of a word based on its spelling, a process which is often called text-to-phoneme or grapheme-to-phoneme conversion (phoneme is the term used by linguists to describe distinctive sounds in a language). The simplest approach to text-to-phoneme conversion is the dictionary-based approach, where a large dictionary containing all the words of a language and their correct pronunciations is stored by the program. Determining the correct pronunciation of each word is a matter of looking up each word in the dictionary and replacing the spelling with the pronunciation specified in the dictionary. The other approach is rule-based, in which pronunciation rules are applied to words to determine their pronunciations based on their spellings. This is similar to the "sounding out", or synthetic phonics, approach to learning reading.

Each approach has advantages and drawbacks. The dictionary-based approach is quick and accurate, but completely fails if it is given a word which is not in its dictionary.[citation needed] As dictionary size grows, so too do the memory space requirements of the synthesis system. On the other hand, the rule-based approach works on any input, but the complexity of the rules grows substantially as the system takes into account irregular spellings or pronunciations. (Consider that the word "of" is very common in English, yet is the only word in which the letter "f" is pronounced [v].) As a result, nearly all speech synthesis systems use a combination of these approaches.
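A combined approach can be sketched as a dictionary lookup with a rule-based fallback. The exception entries and letter-to-sound rules below are a hypothetical, deliberately tiny set using ARPAbet-style symbols; real rule sets are far larger and context-dependent.

```python
# Hypothetical exception dictionary (ARPAbet-style symbols).
EXCEPTIONS = {
    "of": ["AH", "V"],  # irregular: the rules below would give AA F
    "the": ["DH", "AH"],
}

# Toy letter-to-sound rules. Two-letter graphemes come first so that they
# win over their single letters (longest match).
RULES = [
    ("ch", ["CH"]), ("sh", ["SH"]), ("th", ["TH"]), ("ee", ["IY"]),
    ("a", ["AE"]), ("b", ["B"]), ("c", ["K"]), ("d", ["D"]), ("e", ["EH"]),
    ("f", ["F"]), ("g", ["G"]), ("h", ["HH"]), ("i", ["IH"]), ("j", ["JH"]),
    ("k", ["K"]), ("l", ["L"]), ("m", ["M"]), ("n", ["N"]), ("o", ["AA"]),
    ("p", ["P"]), ("q", ["K"]), ("r", ["R"]), ("s", ["S"]), ("t", ["T"]),
    ("u", ["AH"]), ("v", ["V"]), ("w", ["W"]), ("x", ["K", "S"]),
    ("y", ["Y"]), ("z", ["Z"]),
]


def letter_to_sound(word):
    """Rule-based fallback: greedy left-to-right grapheme matching."""
    phones, i = [], 0
    while i < len(word):
        for grapheme, sounds in RULES:
            if word.startswith(grapheme, i):
                phones.extend(sounds)
                i += len(grapheme)
                break
        else:
            i += 1  # no rule for this character (digit, apostrophe, ...): skip it
    return phones


def pronounce(word):
    """Dictionary first; rules only for words the dictionary lacks."""
    w = word.lower()
    if w in EXCEPTIONS:
        return EXCEPTIONS[w]
    return letter_to_sound(w)


print(pronounce("of"), pronounce("Sheep"))
```

The dictionary stays small because it only has to hold the words the rules get wrong, which is the trade-off the paragraph above describes.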

Languages with a phonemic orthography have a very regular writing system, and the prediction of the pronunciation of words based on their spellings is quite successful. Speech synthesis systems for such languages often use the rule-based method extensively, resorting to dictionaries only for those few words, like foreign names and borrowings, whose pronunciations are not obvious from their spellings. On the other hand, speech synthesis systems for languages like English, which have extremely irregular spelling systems, are more likely to rely on dictionaries, and to use rule-based methods only for unusual words, or words that aren't in their dictionaries.

Evaluation challenges

The consistent evaluation of speech synthesis systems may be difficult because of a lack of universally agreed objective evaluation criteria. Different organizations often use different speech data. The quality of speech synthesis systems also depends to a large degree on the quality of the production technique (which may involve analogue or digital recording) and on the facilities used to replay the speech. Evaluating speech synthesis systems has therefore often been compromised by differences between production techniques and replay facilities. Recently, however, some researchers have started to evaluate speech synthesis systems using a common speech dataset.[34]

Prosodics and emotional content

A study in the journal Speech Communication by Amy Drahota and colleagues at the University of Portsmouth, UK, reported that listeners to voice recordings could determine, at better than chance levels, whether or not the speaker was smiling.[35][36][37] It was suggested that identification of the vocal features that signal emotional content may be used to help make synthesized speech sound more natural.

Dedicated hardware

Early Technology (Not available)

Current (as of 2013)

- Magnevation SpeakJet (www.speechchips.com) TTS256 Hobby and experimenter.

- Epson S1V30120F01A100 (www.epson.com) IC DECTalk Based voice, Robotic, Eng/Spanish

- Textspeak TTS-EM (www.textspeak.com) ICs, Modules and Industrial enclosures in 24 languages. Human sounding, Phoneme based.

Computer operating systems or outlets with speech synthesis

Atari

Arguably, the first speech system integrated into an operating system was that of the 1400XL/1450XL personal computers designed by Atari, Inc. using the Votrax SC01 chip in 1983. The 1400XL/1450XL computers used a finite-state machine to enable World English Spelling text-to-speech synthesis.[39] Unfortunately, the 1400XL/1450XL personal computers never shipped in quantity.

The Atari ST computers were sold with "stspeech.tos" on floppy disk.

Apple

The first speech system integrated into an operating system that shipped in quantity was Apple Computer's MacInTalk in 1984. The software was licensed from third-party developers Joseph Katz and Mark Barton (later, SoftVoice, Inc.) and was featured during the 1984 introduction of the Macintosh computer. Since the 1980s, Macintosh computers have offered text-to-speech capabilities through the MacInTalk software. In the early 1990s, Apple expanded these capabilities, offering system-wide text-to-speech support. With the introduction of faster PowerPC-based computers, Apple included higher-quality voice sampling. Apple also introduced speech recognition into its systems, which provided a fluid command set. More recently, Apple has added sample-based voices. Starting as a curiosity, the speech system of the Apple Macintosh has evolved into a fully supported program, PlainTalk, for people with vision problems.

VoiceOver was first featured in Mac OS X Tiger (10.4). During 10.4 (Tiger) and the first releases of 10.5 (Leopard), only one standard voice shipped with Mac OS X. Starting with 10.6 (Snow Leopard), the user can choose from a wide range of voices. VoiceOver voices take realistic-sounding breaths between sentences and offer improved clarity at high read rates over PlainTalk. Mac OS X also includes say, a command-line application that converts text to audible speech. The AppleScript Standard Additions include a say verb that allows a script to use any of the installed voices and to control the pitch, speaking rate and modulation of the spoken text.

The Apple iOS operating system used on the iPhone, iPad and iPod Touch uses VoiceOver speech synthesis for accessibility.[40]

Some third-party applications also provide speech synthesis to facilitate navigating, reading web pages or translating text.

AmigaOS

The second operating system with advanced speech synthesis capabilities was AmigaOS, introduced in 1985. The voice synthesis was licensed by Commodore International from SoftVoice, Inc., who also developed the original MacInTalk text-to-speech system. It featured a complete system of voice emulation, with both male and female voices and "stress" indicator markers, made possible by advanced features of the Amiga hardware audio chipset.[41]

It was divided into a narrator device and a translator library. Amiga Speak Handler featured a text-to-speech translator. AmigaOS considered speech synthesis a virtual hardware device, so the user could even redirect console output to it. Some Amiga programs, such as word processors, made extensive use of the speech system.

Microsoft Windows

Modern Windows desktop systems can use SAPI 4 and SAPI 5 components to support speech synthesis and speech recognition. SAPI 4.0 was available as an optional add-on for Windows 95 and Windows 98. Windows 2000 added Narrator, a text-to-speech utility for people who have visual impairments. Third-party programs such as CoolSpeech, Textaloud and Ultra Hal can perform various text-to-speech tasks such as reading text aloud from a specified website, email account, text document, the Windows clipboard, the user's keyboard typing, etc. Not all programs can use speech synthesis directly.[42]

Some programs can use plug-ins, extensions or add-ons to read text aloud. Third-party programs are available that can read text from the system clipboard.

Microsoft Speech Server is a server-based package for voice synthesis and recognition. It is designed for network use with web applications and call centers.

Text-to-Speech (TTS) refers to the ability of computers to read text aloud. A TTS engine converts written text to a phonemic representation, then converts the phonemic representation to waveforms that can be output as sound. TTS engines with different languages, dialects and specialized vocabularies are available through third-party publishers.[43]

Android

Version 1.6 of Android added support for speech synthesis (TTS).[44]

Internet

Currently, there are a number of applications, plugins and gadgets that can read messages directly from an e-mail client and web pages from a web browser or Google Toolbar, such as Text-to-voice, which is an add-on to Firefox.

Some specialized software can narrate RSS feeds. Online RSS narrators simplify information delivery by allowing users to listen to their favourite news sources and to convert them to podcasts. In addition, online RSS readers are available on almost any PC connected to the Internet. Users can download generated audio files to portable devices, e.g. with the help of a podcast receiver, and listen to them while walking, jogging or commuting to work.

A growing field in Internet-based TTS is web-based assistive technology, e.g. 'Browsealoud' from a UK company and Readspeaker. These can deliver TTS functionality to anyone (for reasons of accessibility, convenience, entertainment or information) with access to a web browser. The non-profit project Pediaphon was created in 2006 to provide a similar web-based TTS interface to Wikipedia.[45]

Other work is being done in the context of the W3C through the W3C Audio Incubator Group with the involvement of the BBC and Google Inc.

Others

- Some e-book readers offer speech synthesis, such as the Amazon Kindle, Samsung E6, PocketBook eReader Pro, enTourage eDGe, and the Bebook Neo.

- Some models of Texas Instruments home computers produced in 1979 and 1981 (Texas Instruments TI-99/4 and TI-99/4A) were capable of text-to-phoneme synthesis or reciting complete words and phrases (text-to-dictionary), using a very popular Speech Synthesizer peripheral. TI used a proprietary codec to embed complete spoken phrases into applications, primarily video games.[46]

- IBM's OS/2 Warp 4 included VoiceType, a precursor to IBM ViaVoice.

- Free and open-source software systems, including Linux, have various speech synthesis programs, such as the open-source Festival Speech Synthesis System, which uses diphone-based synthesis (and can use a limited number of MBROLA voices), and gnuspeech from the Free Software Foundation, which uses articulatory synthesis.[47]

- Companies which developed speech synthesis systems but which are no longer in this business include BeST Speech (bought by L&H), Eloquent Technology (bought by SpeechWorks), Lernout & Hauspie (bought by Nuance), SpeechWorks (bought by Nuance), and Rhetorical Systems (bought by Nuance).

- GPS navigation units produced by Garmin, Magellan, TomTom and others use speech synthesis for automobile navigation.

Speech synthesis markup languages

A number of markup languages have been established for the rendition of text as speech in an XML-compliant format. The most recent is Speech Synthesis Markup Language (SSML), which became a W3C recommendation in 2004. Older speech synthesis markup languages include Java Speech Markup Language (JSML) and SABLE. Although each of these was proposed as a standard, none of them have been widely adopted.

Speech synthesis markup languages are distinguished from dialogue markup languages. VoiceXML, for example, includes tags related to speech recognition, dialogue management and touchtone dialing, in addition to text-to-speech markup.
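For illustration, the sketch below builds a small SSML 1.0 document with Python's standard `xml.etree.ElementTree`. The `speak`, `say-as`, `break`, and `prosody` elements and the `http://www.w3.org/2001/10/synthesis` namespace come from SSML; the `interpret-as="digits"` value is defined in a separate W3C note rather than in the core recommendation, and engine support for it varies. The PIN and wording are made-up examples.

```python
import xml.etree.ElementTree as ET

SSML_NS = "http://www.w3.org/2001/10/synthesis"
XML_LANG = "{http://www.w3.org/XML/1998/namespace}lang"


def build_ssml(pin):
    """Return an SSML document that reads `pin` digit by digit, pauses, and
    then speaks a reminder at a slower rate."""
    ET.register_namespace("", SSML_NS)  # serialize SSML as the default namespace
    speak = ET.Element(f"{{{SSML_NS}}}speak", {"version": "1.0", XML_LANG: "en-US"})
    speak.text = "Your PIN is "
    say_as = ET.SubElement(speak, f"{{{SSML_NS}}}say-as", {"interpret-as": "digits"})
    say_as.text = pin
    pause = ET.SubElement(speak, f"{{{SSML_NS}}}break", {"time": "500ms"})
    pause.tail = " "
    slow = ET.SubElement(speak, f"{{{SSML_NS}}}prosody", {"rate": "slow"})
    slow.text = "Please do not share it."
    return ET.tostring(speak, encoding="unicode")


print(build_ssml("4821"))
```

Building the document with an XML library rather than string concatenation keeps special characters in the spoken text properly escaped.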

Applications

Speech synthesis has long been a vital assistive technology tool, and its application in this area is significant and widespread. It allows environmental barriers to be removed for people with a wide range of disabilities. The longest-standing application has been the use of screen readers for people with visual impairment, but text-to-speech systems are now commonly used by people with dyslexia and other reading difficulties as well as by pre-literate children. They are also frequently employed to aid those with severe speech impairment, usually through a dedicated voice output communication aid.

Speech synthesis techniques are also used in entertainment productions such as games and animations. In 2007, Animo Limited announced the development of a software application package based on its speech synthesis software FineSpeech, explicitly geared towards customers in the entertainment industries, able to generate narration and lines of dialogue according to user specifications.[48] The application reached maturity in 2008, when NEC Biglobe announced a web service that allows users to create phrases from the voices of Code Geass: Lelouch of the Rebellion R2 characters.[49]

In recent years, text-to-speech for disability and communication aids has become widely deployed in mass transit. Text-to-speech is also finding new applications outside the disability market. For example, speech synthesis, combined with speech recognition, allows for interaction with mobile devices via natural language processing interfaces.

See also